Choosing the right AI monitoring tool can feel like searching for a hidden gem. Sometimes the first option you find leaves you wanting more or does not quite fit your needs. Newer platforms keep appearing with features that promise a better experience. Are there solutions that offer a friendlier interface, unique functions, or better value? Each alternative can feel surprisingly different. The list below covers six tools that might change how you look at AI observability and monitoring.

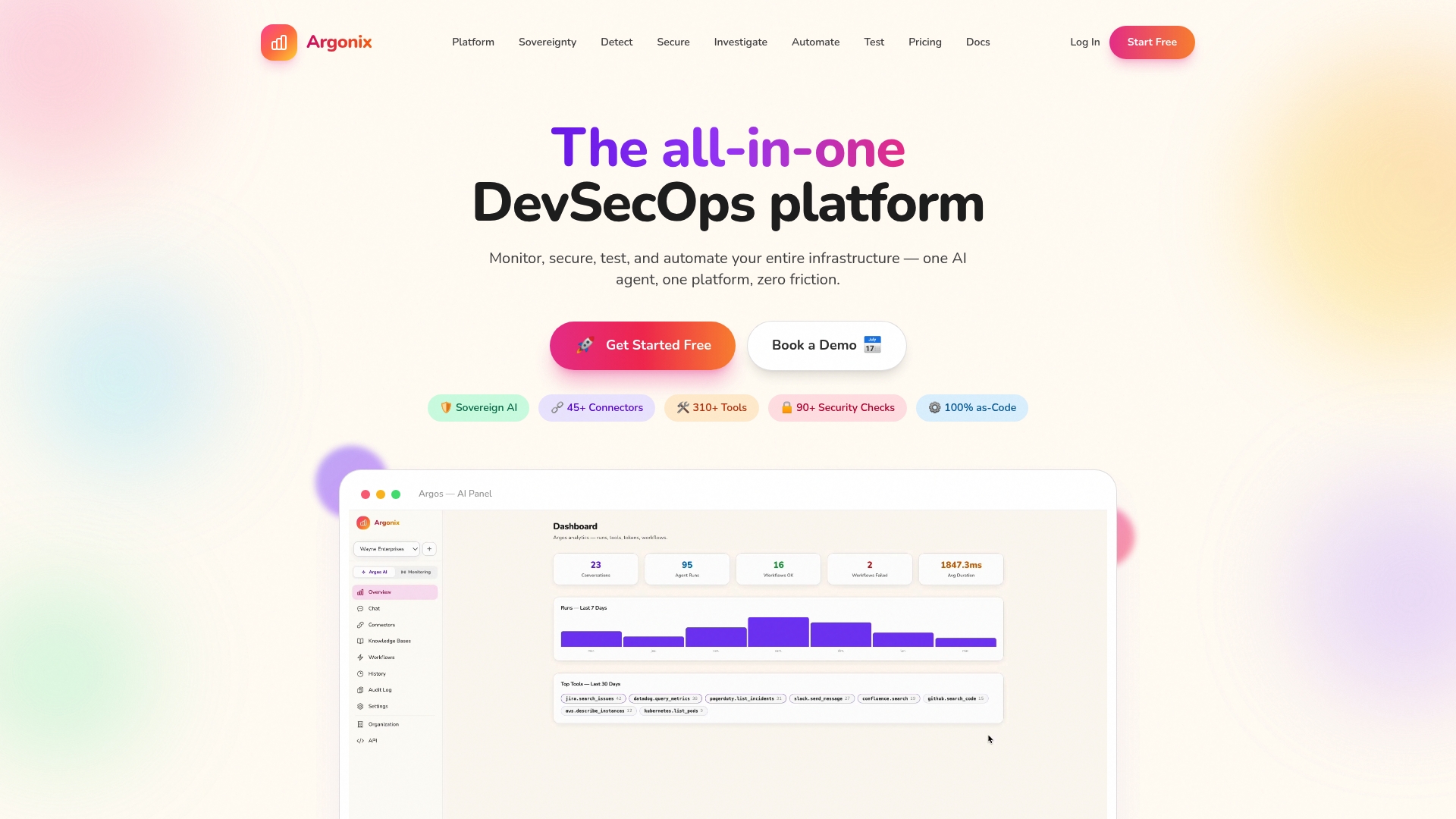

Argonix

At a Glance

Argonix is an all-in-one DevSecOps platform that combines monitoring, security, testing, and automation with AI-driven tooling. Its standout strength is data sovereignty through a local LLM by default, alongside broad integrations and clear pricing.

Actionable takeaway: Try the free tier to validate local LLM behavior on your infrastructure.

Core Features

Argonix unifies monitoring, incident response, and automation with five core capabilities that matter for cloud operations. It runs a local LLM by default, so sensitive logs and signals stay inside your environment while the platform automates common investigations and remediations.

The platform connects to 45+ tools and services for monitoring, security, and automation. It includes pre-built workflows, multiple monitor types for service health checks, and full DevSecOps security with CSPM and MITRE ATT&CK mapping.

Actionable takeaway: Map one critical service to Argonix connectors and validate automated workflows in a staging window.

Pros

- Local LLM with retained data keeps sensitive telemetry inside your infrastructure and reduces exposure in shared cloud models.

- Wide integration surface lets you pull metrics and alerts from many observability and CI tools for a single-pane view of incidents.

- Automated root cause investigation and remediation reduces mean time to resolution by performing repeatable checks and action steps automatically.

- Flexible deployment options let you run Argonix on premises or in the cloud to match your security posture and compliance needs.

- Transparent pricing and free tier make it easy to pilot the platform before committing to scaled plans.

Actionable takeaway: Prioritize connectors and automated workflows that address your top three incident types.

Who It's For

Argonix is built for DevOps, SRE, and cybersecurity teams in mid-sized to large organizations that need integrated monitoring, security, and automation with AI capabilities. It fits teams managing complex services where data control, automated investigations, and security mapping to MITRE ATT&CK are high priorities.

Actionable takeaway: Use Argonix when you need a single platform to reduce operational fragmentation across teams.

Unique Value Proposition

Argonix sets the standard by combining local LLM data sovereignty, deep connector coverage, and DevSecOps security that maps to MITRE ATT&CK. That combination gives sophisticated buyers a single operational plane for detection, investigation, and remediation while preserving data control.

Buyers pick Argonix because it reduces vendor sprawl, enforces consistent automated playbooks, and supports flexible deployment models. The transparent pricing with a free tier lets teams validate outcomes before scaling, and the pre-built workflows shorten time to value for common DevOps tasks.

Actionable takeaway: Validate Argonix against one regulatory or compliance requirement to see how local LLM helps with data governance.

Real World Use Case

A company monitoring a microservices architecture uses Argonix to detect degraded service health, run automated root cause investigations, and apply remediation steps for common failure modes. The team retains all telemetry locally with the LLM and documents security findings against MITRE ATT&CK for audit trails.

Actionable takeaway: Replicate a recent incident in a sandbox and measure MTTD and MTTR improvements with Argonix.
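The sandbox comparison above can be sketched in a few lines of Python. This is a minimal illustration, not part of Argonix: the `Incident` class, the `replay` helper, and the sample timings are all hypothetical. It also assumes both metrics are measured from the moment the fault began, and some teams measure resolution time from detection instead.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class Incident:
    started: datetime   # when the fault began
    detected: datetime  # when monitoring raised an alert
    resolved: datetime  # when service was restored


def mttd_minutes(incidents):
    """Mean time to detect: average gap between fault start and detection."""
    return mean((i.detected - i.started).total_seconds() / 60 for i in incidents)


def mttr_minutes(incidents):
    """Mean time to resolve: average gap between fault start and resolution."""
    return mean((i.resolved - i.started).total_seconds() / 60 for i in incidents)


def replay(start, detect_after, resolve_after):
    """Build an incident from minute offsets (hypothetical helper)."""
    return Incident(
        start,
        start + timedelta(minutes=detect_after),
        start + timedelta(minutes=resolve_after),
    )


# Hypothetical sample timings, for illustration only.
t0 = datetime(2024, 1, 1, 12, 0)
baseline = [replay(t0, 10, 60), replay(t0, 20, 90)]  # current process
sandbox = [replay(t0, 4, 30), replay(t0, 6, 40)]     # replayed with automation

for label, runs in (("baseline", baseline), ("sandbox", sandbox)):
    print(f"{label}: MTTD={mttd_minutes(runs):.1f} min, MTTR={mttr_minutes(runs):.1f} min")
```

Replaying the same incidents under both setups keeps the comparison fair, since the fault itself does not change between runs.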

Pricing

Argonix offers a free tier at €0 per month, a Startup plan at €10 per month, a Pro plan at €200 per month, and custom enterprise options for large scale needs. Plans scale with connectors, agents, and support levels.

Actionable takeaway: Start on the free tier and upgrade to Pro when you need expanded connectors and enterprise features.

Website: https://argonix.io

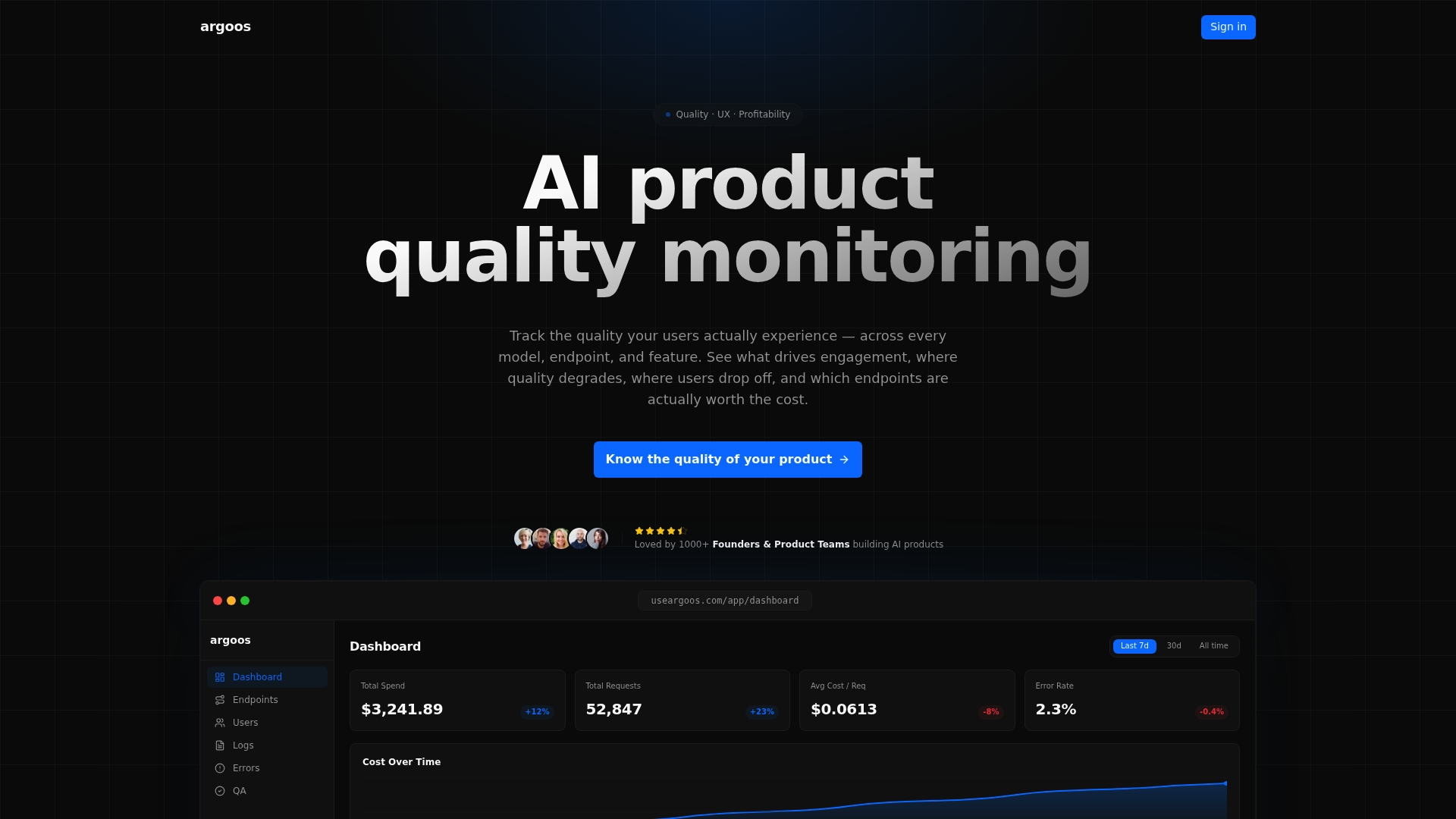

Argoos

At a Glance

Argoos is an AI product quality monitoring platform that tracks and analyzes AI responses across models and endpoints in real time. It delivers actionable alerts for regressions while giving teams visibility into user experience and cost drivers.

Core Features

Argoos offers real-time quality monitoring, regression detection and alerts, cost and engagement analysis by endpoint, and user-level insights that plug into existing AI workflows with a single tracking call. Integration requires no infrastructure setup.

Pros

- Comprehensive real-time insights: The platform provides continuous visibility into response quality so teams can spot issues before customers notice.

- No infrastructure required: You can integrate Argoos with minimal setup, which lowers operational overhead for DevOps teams and reduces deployment friction.

- Multi-provider support: Argoos supports multiple AI providers and models so you can compare performance across endpoints from one console.

- Flexible pricing tiers: Plans include a free tier and options for scaling, which helps small teams start monitoring quickly without upfront cost.

- User-level tracking: The product surfaces per-user metrics that link engagement and quality, which aids targeted troubleshooting and UX improvements.

Cons

- Limited deep customization: The product documentation does not show extensive options for advanced analytics, custom models, or highly tailored dashboards, which may limit power users.

- Monitoring focus over development tooling: Argoos centers on quality monitoring rather than on model training or deployment tools, which means teams need other platforms for development workflows.

- Potential scale cost: Pricing above the free tier may become expensive at large scale, depending on the volume of endpoints and tracking calls.

Who It's For

Argoos fits product teams and AI developers responsible for AI features in production who need to measure user experience and control API costs. DevOps teams that manage multiple models or providers will find its consolidated monitoring useful.

Unique Value Proposition

Argoos combines quality metrics with cost signals and regression alerts in one place which shortens the feedback loop between observed user issues and remediation. That focus on both experience and spend distinguishes it from pure observability tools.

Real World Use Case

A company running GPT-based chatbots uses Argoos to monitor response quality after model updates. The team detects a regression in answer relevance within hours and reroutes traffic while investigating, avoiding a larger drop in engagement.
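To make the idea of a relevance regression concrete, here is a minimal Python sketch of one way such a check could work. It does not use or describe the Argoos API. The function name, the threshold, and the sample scores are assumptions for illustration, and the check is a plain z-test of the recent mean against a baseline window.

```python
from statistics import mean, stdev


def detect_regression(baseline, recent, z_threshold=3.0):
    """Return True when the recent mean score sits more than
    z_threshold standard errors below the baseline mean."""
    base_mean = mean(baseline)
    std_err = stdev(baseline) / len(recent) ** 0.5
    if std_err == 0:
        # Baseline has no spread: any drop counts as a regression.
        return mean(recent) < base_mean
    z = (mean(recent) - base_mean) / std_err
    return z < -z_threshold


# Hypothetical relevance scores in [0, 1], for illustration only.
before_update = [0.82, 0.85, 0.80, 0.84, 0.83, 0.81, 0.86, 0.84]
after_update = [0.70, 0.72, 0.68, 0.71]
steady_state = [0.83, 0.82, 0.85, 0.84]

print("regression after update:", detect_regression(before_update, after_update))
print("regression in steady state:", detect_regression(before_update, steady_state))
```

A production check would likely need larger windows and per-endpoint baselines. The sketch only shows why a short run of low scores after a model update can be flagged within hours.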

Pricing

A free plan is available with limited features. The Pro plan starts at $49 per month for larger scale needs. Enterprise options come with custom pricing for high volume or dedicated support.

Website: https://www.useargoos.com/

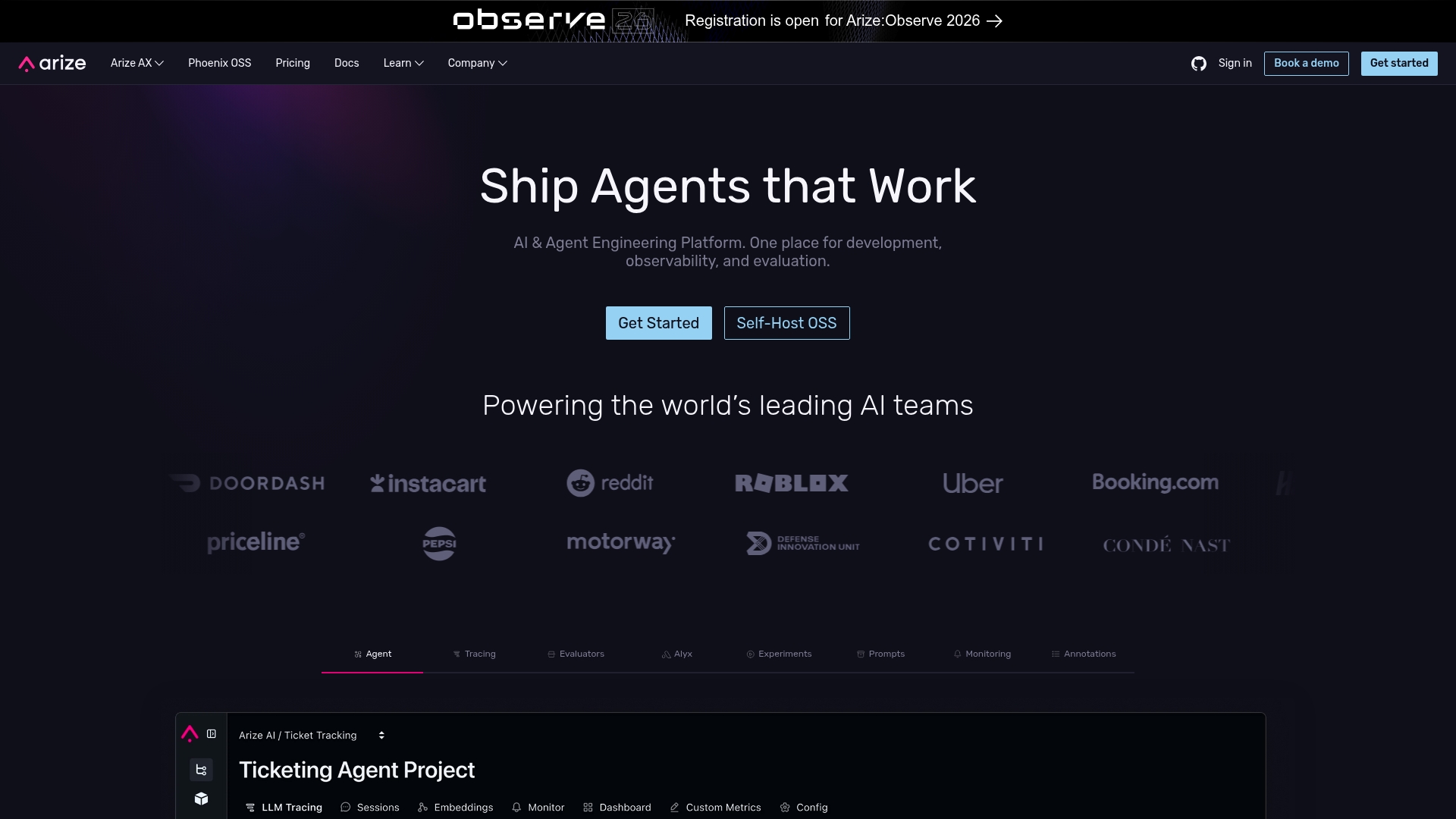

Arize AI

At a Glance

Arize AI delivers an enterprise-grade platform for AI observability that focuses on monitoring, evaluation, and debugging of AI models and applications. It fits teams that need transparency across model performance with tools for real-time analysis and diagnostics.

Core Features

Arize AI combines Arize AX for model observability and Alyx as an AI engineering agent for building and debugging AI systems. The platform lists model evaluation and debugging, open standards compatibility, and real time diagnostics as core capabilities.

Pros

- Enterprise scale support: Arize AI supports enterprise scale AI management which helps large teams govern models across many services and use cases.

- Open standards friendly: The platform emphasizes open standards and open source components, which reduces vendor lock-in and increases portability.

- Comprehensive toolset: The combined functions for monitoring, evaluation, and debugging provide a single place to manage model quality and performance.

- Transparency and control: The focus on open source components gives teams more visibility into how their tools operate and how data flows.

- Flexible deployment options: Arize AI offers both SaaS and self hosting options which supports different security and compliance requirements.

Cons

- High complexity for small teams: The platform’s breadth introduces complexity that small teams or beginners will find challenging to manage effectively.

- Pricing requires inquiry: Pricing details vary by plan and often require direct contact which makes initial cost comparison harder for procurement cycles.

- Advanced setup effort: Some advanced features demand substantial setup and integration work which extends time to value for teams without dedicated engineering resources.

Who It's For

Arize AI serves AI teams and enterprise organizations that need robust monitoring, evaluation, and debugging tools for production AI. It targets groups running many models or operating in regulated environments where visibility and control over model behavior matter.

Unique Value Proposition

Arize AI’s unique value is the combination of an observability platform with an engineering agent that supports end-to-end model evaluation and debugging while honoring open standards. That mix helps organizations avoid vendor lock-in while maintaining enterprise-level tooling.

Real World Use Case

A large tech company uses Arize AI to monitor its machine learning models in production, detect regressions early, and route issues to engineering teams for debugging. The platform provides real-time diagnostics that shorten incident resolution windows.

Pricing

Pricing information is available on the Arize AI website and varies by plan. Options include free tiers, startup plans, and enterprise levels with tailored pricing that requires direct inquiry for exact quotes.

Website: https://arize.com

WhyLabs

At a Glance

WhyLabs delivers community-driven open source tooling for AI observability and responsible AI operations. The original company has discontinued operations, yet its projects still provide practical tooling for logging and monitoring AI systems.

Takeaway: Use WhyLabs when you want community-led observability with no licensing costs.

Core Features

WhyLabs centers on an open source AI observability platform built around data logging and model monitoring. The suite includes the whylogs open standard for data logging and langkit as a toolkit for monitoring large language models.

The projects emphasize privacy-preserving logging and tooling to help teams track model behavior and data quality without vendor lock-in.
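The general idea behind privacy-preserving logging is to keep statistical summaries of each batch instead of the raw records. The Python sketch below illustrates that idea only. It is not the whylogs API, and the function names, field names, and sample records are hypothetical.

```python
from statistics import mean


def profile_column(values):
    """Summarize one column without keeping any individual value."""
    present = [v for v in values if v is not None]
    summary = {"count": len(values), "missing": len(values) - len(present)}
    if present:
        summary.update(min=min(present), max=max(present), mean=mean(present))
    return summary


def profile_batch(records, numeric_fields, text_fields):
    """Build a per-field profile for a batch of records."""
    profile = {}
    for name in numeric_fields:
        profile[name] = profile_column([r.get(name) for r in records])
    for name in text_fields:
        # Only text lengths are summarized; the text itself is never stored.
        lengths = [len(r[name]) if r.get(name) is not None else None for r in records]
        profile[name + "_length"] = profile_column(lengths)
    return profile


# Hypothetical chatbot records, for illustration only.
records = [
    {"latency_ms": 120, "prompt": "reset my password"},
    {"latency_ms": 80, "prompt": "hi"},
    {"latency_ms": None, "prompt": "where is my order"},
]

profile = profile_batch(records, numeric_fields=["latency_ms"], text_fields=["prompt"])
print(profile)
```

Only the profile would leave the service, so engineers can still watch for missing values or shifting ranges without seeing what any user typed.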

Takeaway: The feature set is focused and purpose built for observability and privacy aware logging.

Pros

- Open source contributions support responsible AI practices: The projects provide reusable code and standards that help teams implement transparent monitoring workflows.

- Tools for privacy-preserving logging and monitoring: WhyLabs components were designed to reduce exposure of sensitive data while retaining useful telemetry for troubleshooting.

- Continued impact through community and standards development: Community maintenance and adopted standards extend the life and interoperability of the projects.

- Supports monitoring and securing LLMs while preserving privacy: Langkit and whylogs target LLM use cases with telemetry designed for large model behavior analysis.

Takeaway: The strengths lie in community driven standards, privacy focus, and targeted LLM monitoring.

Cons

- The company has discontinued operations, which may impact support and updates for the projects.

- The platform now offers limited direct commercial offerings, so enterprises seeking packaged support may find gaps.

- There is a potential lack of formal support or consulting services for users who need hands-on integration help.

Takeaway: Expect a higher reliance on internal expertise or external consultants for production deployments.

Who It's For

WhyLabs fits organizations and teams developing or deploying AI systems that prioritize responsible AI, privacy, and observability. The ideal users include AI researchers, data scientists, and AI engineers who can integrate and maintain open source tooling.

Takeaway: Choose WhyLabs if your team can self manage open source solutions and values privacy preserving telemetry.

Unique Value Proposition

WhyLabs offers a focused set of open source projects and an open logging standard that let teams adopt consistent telemetry practices without vendor lock in. The combination of whylogs and langkit gives a lightweight path to monitor model health and data quality.

Takeaway: Its unique value is community owned standards for privacy aware AI observability.

Real World Use Case

A tech company uses the open source whylogs to enable privacy preserving logging for AI driven customer support chatbots. The setup provides compliance friendly telemetry while alerting engineers to model drift and performance regressions.

Takeaway: Practical for production bots where data privacy and model reliability matter.

Pricing

Open source, free to use.

Takeaway: No licensing cost for the software itself, but budget for integration and maintenance.

Website: https://whylabs.ai

Evidently AI

At a Glance

Evidently AI is an open source evaluation and observability platform built to test, monitor, and improve AI model quality and safety across updates. It targets teams running LLMs, RAG pipelines, and multi-step AI workflows and offers both cloud and self-hosted options.

Core Features

Evidently AI delivers LLM testing, RAG testing, adversarial checks, and continuous ML monitoring to track data drift and compliance. The platform also provides an open source Evidently Python library that teams can extend and embed into CI pipelines.

Evidently AI supports both cloud and self hosted deployment models so you can keep control of sensitive data while integrating with existing observability stacks. This makes it practical for regulated environments and distributed DevOps teams.

Pros

- Open source and flexible platform: The open source foundation lets your engineers inspect code, customize metrics, and integrate evaluation into pipelines without vendor lock in.

- Comprehensive evaluation metrics: Built in metrics cover quality, safety, drift, and retrieval accuracy so you can quantify regressions after model changes.

- Supports cloud and self hosted deployment: You can run the platform in the cloud for speed or self hosted to meet data sovereignty and compliance requirements.

- Suitable for LLMs, RAG, and AI workflows: The toolset addresses single prompt testing and multi step agent validation for complex production use cases.

- Active community and extensive resources: Public libraries and community examples speed adoption and reduce the time to implement reliable tests.

Cons

- Complex setup for self hosted enterprise deployments can extend time to production and require platform engineering effort to operate reliably.

- Requires understanding of evaluation metrics for effective use so teams without ML evaluation expertise face a learning curve to interpret results correctly.

Who It's For

Evidently AI fits AI teams, ML engineers, data scientists, and AI product teams that build or operate LLM based systems and need rigorous, repeatable validation. Choose it when you want open source extensibility and the option to host tooling inside restricted networks.

Unique Value Proposition

Evidently AI combines a focused set of evaluation tools with an open source library so teams can standardize tests, automate monitoring, and retain control of telemetry. The platform balances actionable metrics with deployability across cloud and self hosted environments.

Real World Use Case

A tech company uses Evidently AI to continuously monitor a production language model, detect data drift, and run adversarial tests before each release. The result is earlier detection of regressions and clearer signals for model retraining and rollback decisions.
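Data drift detection of this kind usually compares a reference distribution with current production data. The Python sketch below uses the Population Stability Index (PSI) as one common drift statistic. It is an illustration written from scratch, not Evidently's implementation or API, and the bin count, the `eps` floor, and the sample data are assumptions.

```python
import math


def psi(reference, current, bins=10, eps=1e-6):
    """Population Stability Index between two numeric samples.

    Bins are equal-width over the reference range; values outside
    that range are clamped into the first or last bin.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # degenerate reference: everything lands in one bin

    def fractions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0)
        return [max(c / len(values), eps) for c in counts]

    ref, cur = fractions(reference), fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Hypothetical feature values, for illustration only.
reference = [i / 100 for i in range(100)]      # spread evenly over [0, 1)
shifted = [0.5 + i / 200 for i in range(100)]  # squeezed into [0.5, 1)

print("PSI, same data:", psi(reference, reference))
print("PSI, shifted data:", round(psi(reference, shifted), 3))
```

A commonly cited rule of thumb treats a PSI above roughly 0.25 as a significant shift, though the right threshold depends on the feature and on how costly a missed drift would be.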

Pricing

Evidently AI offers an open source version at no cost and commercial cloud and enterprise plans with custom pricing based on scale and support needs. Contact sales for enterprise quotes and deployment support.

Website: https://evidentlyai.com

Neptune.ai

At a Glance

Neptune.ai offers focused tools for experiment tracking and monitoring training that help research teams compare and understand model behavior at scale. It fits teams that run thousands of experiments and need searchable, real-time visibility into model runs.

Core Features

Neptune.ai provides experiment tracking, visual dashboards for comparing thousands of runs, and metrics analysis across layers to surface training issues. The platform integrates deeply into training stacks so teams log metrics, artifacts, and configuration without breaking existing workflows.

Pros

- Supports complex training workflows with features that accommodate multi-run experiments and layered metric analysis.

- Enables detailed experiment comparison so teams can identify the most effective model configurations quickly.

- Helps in understanding model behavior in real time by surfacing anomalies and training issues as they occur.

- Deep integration with training processes reduces friction when adding tracking into existing pipelines and CI.

- Facilitates data-driven decision making by storing run metadata, metrics, and visualizations for later analysis.

Cons

- Specific to AI and machine learning workflows, so it provides limited value for general DevOps or infrastructure automation teams.

- May require technical expertise to fully utilize because advanced tracking and integration demand familiarity with training stacks and tooling.

- Dependent on integration within training ecosystems, which means teams must invest time to connect their frameworks and pipelines.

Who It's For

Neptune.ai targets AI researchers, data scientists, and machine learning engineers who run experimental model development and iterative training. It suits teams that manage many experiments and need centralized logging, search, and comparison to accelerate research cycles.

Unique Value Proposition

Neptune.ai centralizes experiment metadata and training telemetry so teams replace ad hoc spreadsheets with searchable runs and reproducible records. Its value lies in enabling repeatable analysis across thousands of runs and exposing training problems early enough to reduce wasted compute.

Real World Use Case

A research team uses Neptune.ai to track and compare thousands of deep learning training runs, tagging hyperparameter sets and dataset versions. They identify optimal configurations faster and troubleshoot diverging runs by reviewing layer-wise metrics in near real time.
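The workflow in this use case can be reduced to a small Python sketch. It shows the idea of logging runs, picking the best configuration, and spotting diverging runs. It is not the Neptune.ai client API, and the `Run` class, the helper functions, and the sample losses are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    run_id: str
    params: dict                                   # hyperparameters, dataset version, tags
    val_loss: list = field(default_factory=list)   # one value per evaluation step

    def log(self, value):
        self.val_loss.append(value)


def best_run(runs):
    """Return the run that reached the lowest validation loss."""
    return min(runs, key=lambda r: min(r.val_loss))


def is_diverging(run, patience=3):
    """True when validation loss rose on each of the last `patience` steps."""
    tail = run.val_loss[-(patience + 1):]
    return len(tail) == patience + 1 and all(b > a for a, b in zip(tail, tail[1:]))


# Hypothetical runs, for illustration only.
run_a = Run("run-a", {"lr": 0.001, "dataset": "v2"})
run_b = Run("run-b", {"lr": 0.1, "dataset": "v2"})
for loss in (0.90, 0.70, 0.60, 0.55):
    run_a.log(loss)
for loss in (0.90, 0.80, 1.10, 1.50, 2.30):
    run_b.log(loss)

print("best:", best_run([run_a, run_b]).run_id)
print("diverging:", [r.run_id for r in (run_a, run_b) if is_diverging(r)])
```

Keeping parameters and metrics on the same record is what makes runs searchable later, which is the main advantage over tracking results in spreadsheets.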

Pricing

Pricing details are not listed on the website. Teams should contact the vendor for plan options and enterprise licensing.

Website: https://neptune.ai

AI Observability and Monitoring Tools Comparison

Below is a comparison of the AI observability and monitoring tools covered above, focusing on their core features, strengths, limitations, and pricing:

| Tool Name | Core Features | Pros | Cons | Pricing |
|---|---|---|---|---|
| Argonix | Local LLM, 45+ tool integrations, MITRE ATT&CK mapping | Secure local telemetry, many connectors, free tier | Requires infrastructure setup | From €0/month |
| Argoos | Real-time quality monitoring, regression alerts | Minimal setup, multi-provider support | Limited advanced customization | From $0/month |
| Arize AI | Model observability, real-time diagnostics | Supports enterprises, open standards compatible | Complex for small teams | Requires inquiry |
| WhyLabs | Open source, privacy-preserving logging | Community-driven, free to use | No direct support, requires expertise | Free |
| Evidently AI | LLM evaluation, RAG testing | Open source, active community | Setup complexity for advanced use cases | Free (open source); custom pricing for cloud and enterprise |
| Neptune.ai | Experiment tracking, real-time comparison | Detailed analytics, deep integration | Domain-specific, technical expertise required | Requires inquiry |

Use this table to evaluate the tools based on their strengths and suitability for your specific operational requirements.

Elevate Your AI Monitoring with Argonix for Seamless Cloud Operations

If you are exploring alternatives to useargoos.com, the challenge often lies in managing AI-driven quality monitoring alongside comprehensive cloud operations without sacrificing data control and automation. Key pain points include fragmented toolsets, lack of local LLM data sovereignty, and limited automation for incident response and remediation. Argonix addresses these with its all-in-one DevSecOps platform that integrates over 45 connectors across cloud providers, observability tools, and CI/CD pipelines, combining AI agents with flexible deployment options.

Experience the power of local and cloud-based LLMs, automated root cause analysis, and infrastructure as code management through Terraform and Kubernetes. This enables your team to reduce tool sprawl, accelerate incident resolution, and maintain full control over sensitive data.

Discover how Argonix can transform your monitoring and automation workflows today.

Explore the future of unified cloud operations at Argonix. Start with our flexible platform to streamline your DevOps while preserving data sovereignty. Visit Argonix now to take control of your AI and infrastructure monitoring with confidence.

Frequently Asked Questions

What are the key features to look for in alternatives to useargoos.com?

When evaluating alternatives to useargoos.com, prioritize features such as real-time quality monitoring, integration simplicity, and cost analysis capabilities. Choose platforms that provide actionable alerts for performance regressions to ensure effective user experience tracking.

How can I assess the scalability of an alternative to useargoos.com?

To assess scalability, check if the platform can handle increasing volumes of data without significant performance issues. Run a pilot test by simulating multiple endpoints to see how it adapts, ideally within a 30-day trial period.

What should I consider regarding pricing when choosing an alternative?

Examine the pricing structure, including any free tiers and costs associated with scaling features. Look for transparent pricing that aligns with your budget needs to avoid unexpected expenses as you expand usage.

How can I ensure data privacy when using alternatives to useargoos.com?

Select platforms that emphasize data sovereignty and have features explicitly aimed at protecting sensitive information. Review their compliance with relevant privacy standards to assess their commitment to data safety.

What type of customer support can I expect from alternatives to useargoos.com?

Customer support varies widely, so verify the availability of support channels such as chat, email, or phone. Aim for platforms that provide dedicated support teams, especially if you plan to scale usage significantly.